Update 8/5/16: Be sure to also check out today's blog post on this issue, including links to Daily News and NY Post articles and today's NYSAPE press release.

The results of the state exams were interesting, to say the least. Statewide, the opt out rate grew, with 25,000 fewer students taking these tests, and the non-participation rate increased from 20% to 22%. Fully 95% of districts in the state did NOT make the threshold of 95% participation. For more opt out stats, see the NYSAPE press release here.

As for the results, take out the champagne, celebrate along with Mayor de Blasio and Chancellor Fariña; state and city proficiency rates were up! “We have seen incredible improvement on these exams,” Schools Chancellor Carmen Fariña said. Incredible is right, in the literal meaning of the word. See the NY proficiency rates in English, showing jumps of nearly 11 points in 3rd grade reading since last year and ten points in 4th grade reading? These leaps just aren't believable -- and anyone who believes otherwise is really taking a leap of faith. The increases in math were big too, if not quite as dramatic.

Only a few problems: the state tests this year were shorter, they were given untimed, and the translation from raw scores to proficiency levels was radically eased. We have apparently entered a new era of test score inflation.

See what happened in NYC schools between 2002-2009, with especially sharp gains in math scores between 2002 and 2009:

Similar to this year, the proficiency gains were also dramatic in ELA, from 51% of NYC students proficient in 2006 rising to 69% in 2009 (though the DOE charts are no longer posted).

Predictably, Bloomberg crowed and rode the wave to re-election and the renewal of mayoral control:

At a news conference at a school in the Bronx, Mr. Bloomberg trumpeted the results as evidence that mayoral control had produced revolutionary improvements and brought city students within spitting distance of state averages after years of mediocrity. “Our reforms are working,” Mr. Bloomberg said. “Our schools are heading in the right direction.” Even Randi Weingarten, the president of the teachers’ union, lavished praise on the mayor and his chancellor. “What we’ve seen in the last seven years is a cohesion and a stability and resources that we did not have beforehand,” she said.

Only, as many of us knew then, and as was eventually confirmed, the gains weren't real. The improvements were not matched by the results on the NAEP, the more reliable national exams, and the tests and the scoring were shown to be easier each year.

Erin Einhorn, then a reporter at the Daily News, did an eye-opening experiment in 2007. She gave the 2002 and 2005 4th grade math tests to a bunch of 4th and 5th graders taking summer school at Brooklyn College. The kids did far better on the 2005 exams, with 24 out of 34 getting higher scores, and only eight students getting lower ones. The p-values, or the percent of field-test takers answering each question correctly (so that a higher value means an easier question), also increased rapidly between 2002 and 2005.

The test score inflation continued up through 2009, with sharp increases in proficiency similar to this year's, due to more predictable and easier tests. The scoring also got easier; Fred Smith, a testing expert, and others discovered that random guesses would yield a Level 2 on the reading exam.

When the bubble finally burst in 2010, and the scores were re-calibrated, this is what occurred:

So how do we know we have entered another era of test score inflation?

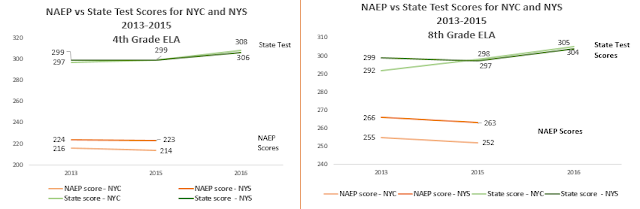

When one compares NYC and NY state scale scores with results on the more reliable NAEP exams between 2013 and 2015, the trend lines don't match up. On the NAEPs, average scores in 4th grade ELA declined slightly for both NY State and NYC, while on the state tests they increased. Because the NAEPs are only given every two years, we have no results for 2016. (The state scores are in dark green and dark orange; the city in lighter colors.)

But what about the claim made by DOE and the media that for the first time, NYC scores matched the state's? See for example, this headline from Chalkbeat: NYC reading scores leap, matching state average for first time. This may have been true in proficiency rates, but certainly not in scores, which are considered a more reliable way to track achievement.

If you look at the charts above, you can easily see that NYC's average scores matched the state's last year in 4th grade reading and surpassed the state in 8th grade ELA. In addition, NYC matched the state's average scores in 4th grade math in 2013, and in 8th grade math in 2015.

Yet as we see, NYC did not match the State scores on the NAEPs, in 2013 or 2015, in any subject. This casts even more doubt on the reliability of the state metrics and provides evidence that we have entered a new era of test score inflation.

Yet another reason to doubt ANY comparisons between city and state scores or proficiency rates is that 95% of the state's districts had more than 5% opt out rates -- and the 95% participation rate is supposedly required for accurate conclusions. Meanwhile, NYC's opt out rate remained relatively low at 2.4% -- which makes any comparisons between NYC and the rest of the state even more questionable.

But perhaps the smoking gun is this: the state cut scores much lower this year, with far lower raw scores translated into higher scale scores which were then equated with higher Proficiency levels, meaning Level 3 or above. [Note: this may not be called cut scores, which according to some test experts refers to the proficiency levels set to the scale scores, rather than to the raw scores. In any case, the effect is the same.]

See the analysis done by Michael O'Donnell of the New Paltz Board of Education and checked by NYSAPE members. See also the chart below -- showing the systematic lowering of the number and percent of raw scores equated to proficiency out of the total possible in 11 out of the 12 exams. [Clarification: the numbers below in the boxes are the percentage of points out of the total number of points needed to get a Level 3. For example, in 3rd grade ELA, a student had to get 34 points out of 55 (62%) to get a Level 3 in 2015, compared to 28 out of 47 (60%) in 2016. And so on. The state data showing the conversion of raw scores to scale scores and the conversion of scale scores to proficiency levels is here; feel free to do your own analysis!]
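For anyone who wants to check the arithmetic, here is a minimal sketch of the percentage calculation described above. It uses only the two 3rd grade ELA figures quoted in this post; the function name `pct_needed` and the `cutoffs` table are my own illustrative choices, not anything from the state's files, and the other eleven exams would have to be filled in from the state's conversion charts.

```python
def pct_needed(points_needed, total_points):
    """Percent of total raw points a student needs to reach Level 3, rounded."""
    return round(100 * points_needed / total_points)

# 3rd grade ELA figures quoted in the post: (raw points for Level 3, total points)
cutoffs = {2015: (34, 55), 2016: (28, 47)}

for year, (needed, total) in cutoffs.items():
    print(f"{year}: {needed}/{total} = {pct_needed(needed, total)}%")
```

Run on each grade and subject, the same calculation would show whether the share of points needed for proficiency went down from one year to the next.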

The fact that the number of points needed for a student to be considered proficient was so much lower this year is very fishy, and only justifiable if the questions were much harder. Yet few if any teachers reported that the exams were more difficult this year, and the Commissioner herself insisted the exams were "comparably rigorous" to last year. Indeed, she had tried to mollify parents who complained they were too long by shortening the exams and giving them untimed -- both changes that would be apt to boost results, all things being equal.

What is somewhat different from the last time we experienced test score inflation is that the NYSED presentation to the public had a clear disclaimer that any trends in test scores over time could not be ascertained. See this, from slide 5 of the NYSED powerpoint:

But then the Commissioner proceeded to ignore this disclaimer, and presented charts showing gains each year from 2013-2016, albeit with a tiny asterisk at the bottom that said:

*Due to changes in the 2016 exams, the proficiency rates from exams prior to 2016 are not directly comparable to the 2016 proficiency rates

What to make of these logical fallacies? What to make of the fact that not only the Commissioner but the NYC press corps almost uniformly glossed over the contradictions in the NYSED presentation, by omitting any mention of the state's history of test score inflation and confining any reservations to the 7th or 8th paragraph of their stories? Indeed, the only reporters to confront the glaring unreliability of the data head-on worked for the Buffalo and Rochester papers.

There are four ways to artificially boost results on exams:

1. Make the exams shorter

2. Allow more time to take them

3. Make the questions easier

4. Change the cut scores and/or translation from raw scores to performance levels.

It appears that the state made at least three out of the four changes listed above. We won’t know if the questions were harder or easier until the state releases the P-values and provides other technical details.

So yet again, we have a Mayor using these unreliable and possibly invalid test results to "prove" that Mayoral control works and using them for his political advantage. Again, we have a State Commissioner who says the results show that the state's educational "reforms" are leading to more learning. Again, the NYC Chancellor and a UFT president are drinking the Kool-Aid, to justify their preferred policies. This time, in addition, the charter schools are also touting the results to "prove" their superiority. Will we have to wait years for a new Commissioner before the state admits the truth, as we did last time when Rick Mills was replaced by David Steiner?

Following Chancellor Fariña's press conference, a journalist wrote me, "Twilight zone. ...Collective self-delusion with the kids used as political chess pieces." It appears we are living through Groundhog Day, over and over again. As the well-known saying goes, “Those who cannot remember the past are condemned to repeat it.”

3 comments:

From the first day of school last year, parents were bombarded with propaganda from the DOE touting all the wonderful changes to the tests that would make them more meaningful and friendly for our children. All year long we received word from on high how important these tests are and parents, teachers and administrators were told that opt out was not acceptable. After constant bullying and threats about loss of funding, parents reluctantly had their children sit for the tests, afraid they would hurt their schools. Of course the test scores were manipulated. How would the DOE maintain the facade of validity to their rhetoric? How could the state justify to parents that the Common Core was doing its job and continue to pour millions of dollars into a testing regime that is useless? How could the state be transparent with this failure when the charter lobby has pushed an agenda they have decided, much against the will of public schools? The Emperor and his Chancellor have no clothes. It's disgusting they would put millions of children, teachers and schools through this (expensive) charade. Shame on them, but it's in line with all the other manipulations we are experiencing as a nation....

Great post Leonie and so right. Where to now?

As Leonie Haimson points out, NYS testing has been a shell game for years. In 2013, NYS rushed to introduce common-core aligned tests before PARCC itself was ready to roll out its own assessment (that would come in the 2014/2015 school year), before teachers had had any meaningful training in the new standards, and before students had had much exposure to them. The decision was a disaster, creating a backlash among parents, principals and educators of all stripes. (And those tests were in addition to the introduction of the MOSL, an entirely separate round of tests, the sole purpose of which was teacher evaluations.) Ever since that hasty 2013 roll out, the “common core” tests have changed substantially every year. In 2013 and 2014 I received copies of the 6th, 7th and 8th grade ELA tests and wrote about them here: https://andreagabor.com/2013/06/03/unwrapping-new-york-states-new-common-core-tests/

And here: https://andreagabor.com/2014/06/11/unwrapping-new-york-states-latest-common-core-tests/

There is no way you can make an apples-to-apples comparison of these tests. Maybe rotten apples to rotten oranges…
